Cross-lingual Expectation Projection for Minimally-Supervised Learning

Overview

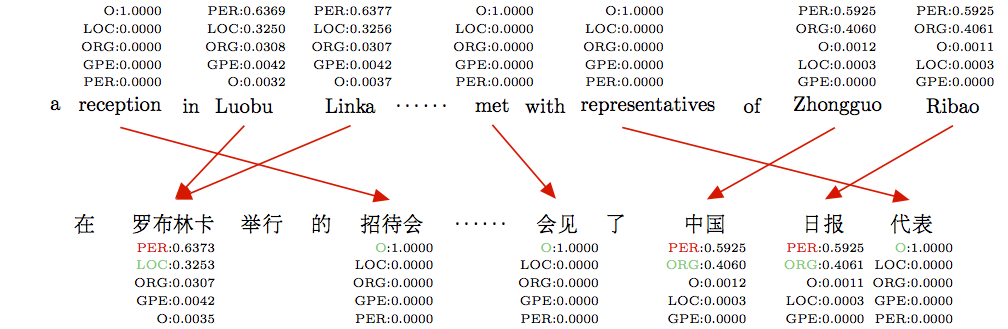

We consider a multilingual weakly supervised learning scenario in which knowledge from annotated corpora in a resource-rich language is transferred via bitext to guide learning in other languages. Past approaches project labels across bitext and use them as features or gold labels for training. We propose a new method that projects model expectations rather than labels, which facilitates the transfer of model uncertainty across language boundaries. We encode expectations as constraints and train a discriminative CRF model using Generalized Expectation Criteria (Mann and McCallum, 2010).
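The difference between projecting labels and projecting expectations can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the toy posteriors, the one-to-one word alignment, and the choice of a squared L2 distance as the penalty are all assumptions made for illustration. In a real system the target-side marginals would come from CRF inference and the penalty would be one term in the training objective.

```python
from typing import Dict, List, Tuple

Dist = Dict[str, float]  # label -> probability for one token

def project_labels(src_post: List[Dist], alignment: List[Tuple[int, int]]) -> Dict[int, str]:
    """Hard projection: each aligned target token gets the source argmax label."""
    return {t: max(src_post[s], key=src_post[s].get) for s, t in alignment}

def project_expectations(src_post: List[Dist], alignment: List[Tuple[int, int]]) -> Dict[int, Dist]:
    """Soft projection: each aligned target token gets the full source posterior."""
    return {t: dict(src_post[s]) for s, t in alignment}

def expectation_penalty(projected: Dict[int, Dist], tgt_marginals: List[Dist]) -> float:
    """Squared L2 distance between projected expectations and the target
    model's marginals (an assumed, illustrative score function)."""
    total = 0.0
    for t, target in projected.items():
        model = tgt_marginals[t]
        total += sum((p - model.get(y, 0.0)) ** 2 for y, p in target.items())
    return total

# Toy source-side posteriors (hypothetical): token 0 is an uncertain PER.
src_post = [{"PER": 0.55, "O": 0.45}, {"PER": 0.05, "O": 0.95}]
# Hypothetical alignment as (source index, target index) pairs.
alignment = [(0, 1), (1, 0)]

hard = project_labels(src_post, alignment)        # uncertainty is discarded
soft = project_expectations(src_post, alignment)  # uncertainty is preserved

# Marginals a target-side model might currently produce (hypothetical).
matching = [{"PER": 0.05, "O": 0.95}, {"PER": 0.55, "O": 0.45}]
confident = [{"PER": 0.05, "O": 0.95}, {"PER": 1.0, "O": 0.0}]
```

With hard projection, target token 1 is simply labeled `PER`, even though the source model was only 55% sure. With soft projection, a target model that is fully confident in `PER` at that position incurs a nonzero penalty, while one that reproduces the source uncertainty incurs none.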

People

Papers

- Mengqiu Wang and Christopher D. Manning, "Cross-lingual Pseudo-Projected Expectation Regularization for Weakly Supervised Learning", Transactions of ACL, 2013.