Dropout Learning and Feature Noising

Overview

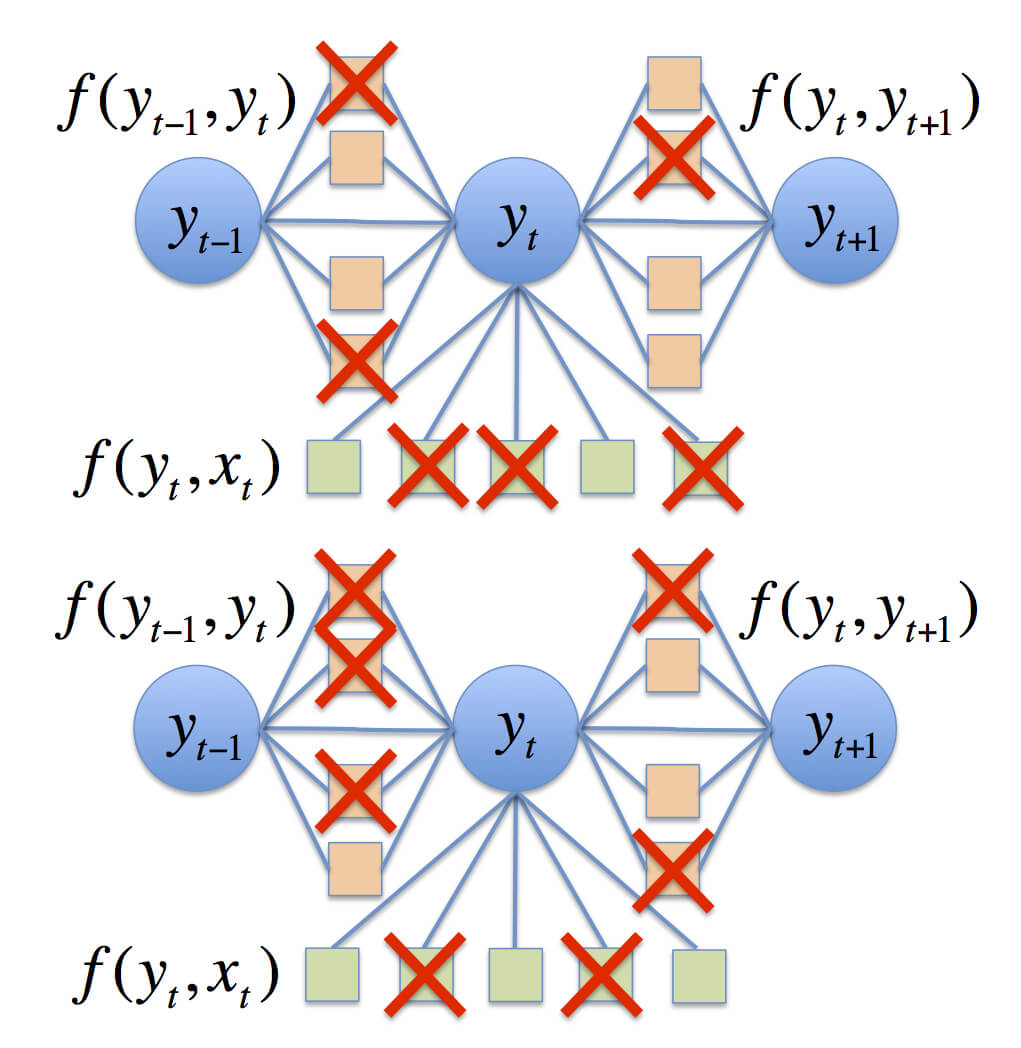

Dropout training (Hinton et al., 2012) attempts to prevent feature co-adaptation in logistic models by randomly dropping out (zeroing) features during supervised training. We show how to speed up dropout training by sampling from or integrating a Gaussian approximation instead of doing Monte Carlo optimization. We also show that dropout performs a form of adaptive regularization and is first-order equivalent to an L2 regularizer applied after scaling the features by an estimate of the inverse diagonal Fisher information matrix. We generalize this regularizer to structured prediction for sequence models and demonstrate its applicability to semi-supervised learning.
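To make the Gaussian approximation concrete, here is a minimal sketch for a single logistic unit. It is an illustration under stated assumptions, not the code from the papers: the function names are hypothetical, each feature is kept independently with probability `keep_prob` and is not rescaled, and the expected sigmoid under the Gaussian is computed with a common closed-form probit-style approximation. The Monte Carlo estimate is included only as a reference to compare against.

```python
import math
import random


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def dropout_moments(w, x, keep_prob):
    """Mean and variance of s = sum_j w_j * x_j * z_j, z_j ~ Bernoulli(keep_prob).

    Assumption: features are dropped independently and not rescaled.
    """
    mean = keep_prob * sum(wj * xj for wj, xj in zip(w, x))
    var = keep_prob * (1.0 - keep_prob) * sum((wj * xj) ** 2 for wj, xj in zip(w, x))
    return mean, var


def gaussian_expected_sigmoid(mean, var):
    """Approximate E[sigmoid(s)] for s ~ N(mean, var).

    Uses the closed form sigmoid(mean / sqrt(1 + pi * var / 8)),
    which is itself an approximation.
    """
    return sigmoid(mean / math.sqrt(1.0 + math.pi * var / 8.0))


def monte_carlo_expected_sigmoid(w, x, keep_prob, n_samples, rng):
    """Reference estimate: average sigmoid(s) over sampled dropout masks."""
    total = 0.0
    for _ in range(n_samples):
        s = sum(wj * xj for wj, xj in zip(w, x) if rng.random() < keep_prob)
        total += sigmoid(s)
    return total / n_samples


if __name__ == "__main__":
    rng = random.Random(0)
    w = [rng.uniform(-0.5, 0.5) for _ in range(50)]
    x = [rng.uniform(0.0, 1.0) for _ in range(50)]
    mean, var = dropout_moments(w, x, keep_prob=0.5)
    print("gaussian   :", gaussian_expected_sigmoid(mean, var))
    print("monte carlo:", monte_carlo_expected_sigmoid(w, x, 0.5, 5000, rng))
```

The Gaussian route needs one pass over the features per example, whereas the Monte Carlo route needs one pass per sampled mask. The two estimates should agree more closely as the number of active features grows, since the sum of many independent masked terms is then better approximated by a Gaussian.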

People

Papers

- Sida Wang, Mengqiu Wang, Chris Manning, Percy Liang and Stefan Wager, "Feature Noising for Log-linear Structured Prediction". EMNLP 2013 [pdf]

- Stefan Wager, Sida Wang and Percy Liang, "Dropout Training as Adaptive Regularization". NIPS 2013 [pdf]

- Sida Wang and Chris Manning, "Fast Dropout Training". ICML 2013 [pdf]

- Sida Wang and Chris Manning, "Fast Dropout Training for Logistic Regression". NIPS 2012 Workshop on Log-linear Models [pdf]

Contact Information

For any comments or questions, please feel free to email sidaw at stanford.edu