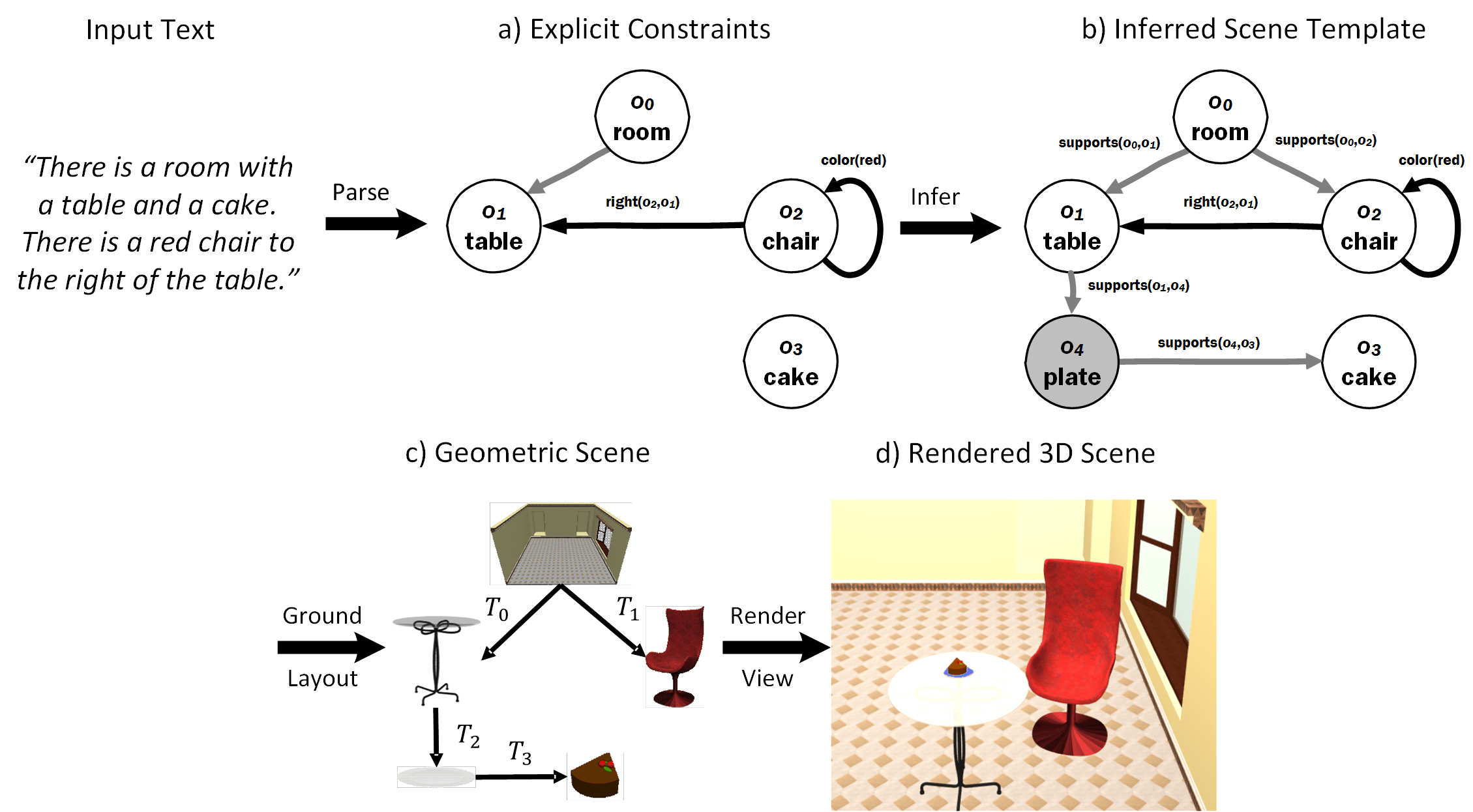

Text to Scene Generation

Overview

The Text2Scene project aims to explore how to automatically generate 3D scenes from a natural text description. When describing a scene, people will often omit important common sense knowledge about the placement of objects. For instance, it is uncommon for people to state that chairs are usually on the floor and upright, and that you eat a cake from a plate on a table. In this project, we attempt to learn such knowledge from a dataset of scenes and use the learned priors to infer missing constraints when generating a scene.
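To make the idea of learned priors concrete, here is a minimal sketch in Python. It is not the project's implementation: the toy `scenes` data, the function names `learn_support_priors` and `support_chain`, and the simple frequency-count model are all assumptions made for illustration. It estimates how often each object rests on each supporting object, then uses the most likely support to fill in what a description leaves unsaid.

```python
from collections import Counter, defaultdict

# Hypothetical toy dataset: each scene lists (object, supporting object) pairs.
scenes = [
    [("chair", "floor"), ("table", "floor"), ("plate", "table"), ("cake", "plate")],
    [("chair", "floor"), ("table", "floor"), ("plate", "table")],
    [("table", "floor"), ("plate", "counter"), ("cake", "plate")],
]


def learn_support_priors(scenes):
    """Estimate P(support | object) by relative frequency over all scenes."""
    counts = defaultdict(Counter)
    for scene in scenes:
        for child, parent in scene:
            counts[child][parent] += 1
    priors = {}
    for child, parent_counts in counts.items():
        total = sum(parent_counts.values())
        priors[child] = {p: n / total for p, n in parent_counts.items()}
    return priors


def support_chain(obj, priors, root="floor"):
    """Follow the most probable support from obj until the root is reached."""
    chain = [obj]
    while chain[-1] != root and chain[-1] in priors:
        candidates = priors[chain[-1]]
        parent = max(candidates, key=candidates.get)
        if parent in chain:  # guard against cycles in noisy data
            break
        chain.append(parent)
    return chain


priors = learn_support_priors(scenes)

# A description that only mentions "a cake" leaves its support unstated;
# the learned priors fill in the missing constraints.
print(support_chain("cake", priors))  # ['cake', 'plate', 'table', 'floor']
```

In this sketch, a description that mentions only a cake yields the implied plate and table as well, mirroring the cake example above. A real system would also need priors over position and orientation, which this toy model ignores.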

Online Demo

We have an online demo that illustrates our Text2Scene system. It shows the inference of the static support hierarchy and basic positioning of objects. Relative position and orientation priors are not currently incorporated in the online demo. Please be patient, as a scene may take a while to load.

People

Data

Datasets associated with this project are available here.

Papers

Text to 3D Scene Generation with Rich Lexical Grounding

[pdf, bib, slides, data]

Learning Spatial Knowledge for Text to 3D Scene Generation

[pdf, bib, data]

Interactive Learning of Spatial Knowledge for Text to 3D Scene Generation

[pdf, bib]

Related Work

WordsEye: An Automatic Text-to-Scene Conversion System

[pdf]

Contact Information

For any comments or questions, please email Angel.